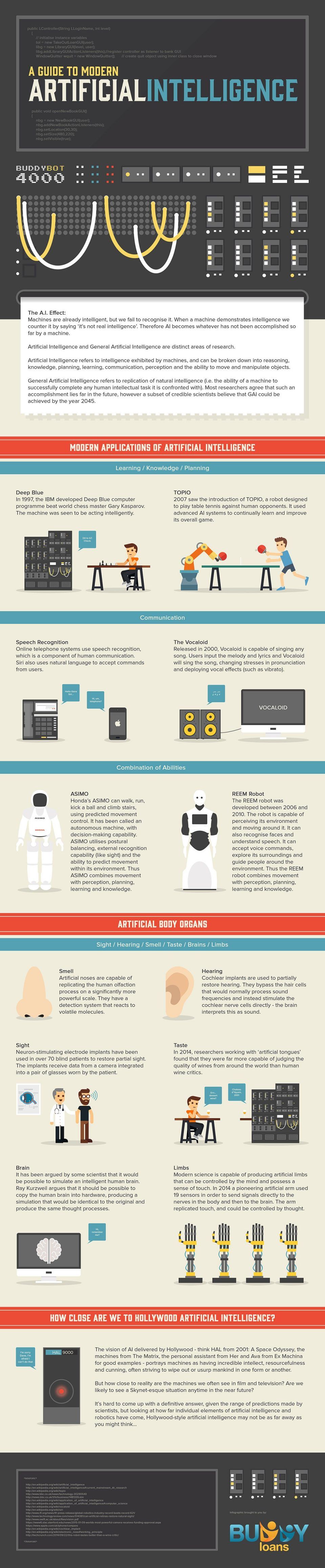

Modern Artificial Intelligence Infographic

The history of Artificial Intelligence isn't a long one, around 60-70 years, but the advances in recent years have been huge. The Modern Artificial Intelligence Infographic shows how technology, coupled with studies of the human brain, has aided in making AI a reality, and a reality we can use every day.

Machines are already intelligent, but we fail to recognise it. When a machine demonstrates intelligence, we counter it by saying ‘it’s not real intelligence’. AI therefore becomes whatever has not yet been accomplished by a machine.

Artificial Intelligence and General Artificial Intelligence

Artificial Intelligence and General Artificial Intelligence are distinct areas of research.

Artificial Intelligence refers to intelligence exhibited by machines, and can be broken down into reasoning, knowledge, planning, learning, communication, perception and the ability to move and manipulate objects.

General Artificial Intelligence (GAI) refers to the replication of natural intelligence (i.e. the ability of a machine to successfully complete any human intellectual task it is confronted with). Most researchers agree that such an accomplishment lies far in the future; however, a subset of credible scientists believe that GAI could be achieved by the year 2045.

Modern Applications of Artificial Intelligence

1. Learning / Knowledge / Planning

- Deep Blue

In 1997, IBM's Deep Blue chess computer beat world chess champion Garry Kasparov. The machine was seen to be acting intelligently.

- TOPIO

2007 saw the introduction of TOPIO, a robot designed to play table tennis against human opponents. It used advanced AI systems to continually learn and improve its overall game.

2. Communication

- Speech Recognition

Automated telephone systems use speech recognition, which is a component of human communication. Siri also accepts commands from users in natural language.

- The Vocaloid

Developed from 2000 and first released commercially in 2004, Vocaloid is capable of singing any song. Users input the melody and lyrics and Vocaloid will sing the song, changing stresses in pronunciation and deploying vocal effects (such as vibrato).

3. Combination of abilities

- ASIMO

Honda’s ASIMO can walk, run, kick a ball and climb stairs, using predicted movement control. It has been called an autonomous machine, with decision-making capability. ASIMO utilises postural balancing, external recognition capability (like sight) and the ability to predict movement within its environment. Thus ASIMO combines movement with perception, planning, learning and knowledge.

- REEM Robot

The REEM robot was developed between 2006 and 2010. The robot is capable of perceiving its environment and moving around it. It can also recognise faces and understand speech. It can accept voice commands, explore its surroundings and guide people around the environment. Thus the REEM robot combines movement with perception, planning, learning and knowledge.

4. Artificial body organs

- Smell

Artificial noses are capable of replicating the human olfaction process on a significantly more powerful scale. They have a detection system that reacts to volatile molecules.

- Sight

Neuron-stimulating electrode implants have been used in over 70 blind patients to restore partial sight. The implants receive data from a camera integrated into a pair of glasses worn by the patient.

- Brain

Some scientists have argued that it would be possible to simulate an intelligent human brain. Ray Kurzweil argues that it should be possible to copy the human brain into hardware, producing a simulation that would be identical to the original and produce the same thought processes.

- Hearing

Cochlear implants are used to partially restore hearing. They bypass the hair cells that would normally process sound frequencies and instead stimulate the cochlear nerve cells directly; the brain interprets this stimulation as sound.

- Taste

In 2014, researchers working with ‘artificial tongues’ found that they were far more capable of judging the quality of wines from around the world than human wine critics.

- Limbs

Modern science is capable of producing artificial limbs that can be controlled by the mind and possess a sense of touch. In 2014, a pioneering artificial arm used 19 sensors to send signals directly to the nerves in the body and then to the brain. The arm replicated touch and could be controlled by thought.

How Close Are We To Hollywood Artificial Intelligence?

The vision of AI delivered by Hollywood (think HAL from 2001: A Space Odyssey, the machines from The Matrix, the personal assistant from Her and Ava from Ex Machina) portrays machines as having incredible intellect, resourcefulness and cunning, often striving to wipe out or usurp mankind in one form or another.

But how close to reality are the machines we often see in film and television? Are we likely to see a Skynet-esque situation anytime in the near future?

It’s hard to come up with a definitive answer, given the range of predictions made by scientists, but looking at how far individual elements of artificial intelligence and robotics have come, Hollywood-style artificial intelligence may not be as far away as you might think...
